16 Oct Hubert AI for image processing & insights

We live in a time of over-production: we over-produce electronics, commodity items and cars, but most of all we over-produce information. We also live in an age in which everybody has a decent camera in their pocket. A photograph is worth a thousand words, as the saying goes, so it should come as no surprise that the over-production of images is overwhelming.

Obtaining the photograph is no longer a problem. The thorny question is how to make efficient use of our vast collection of images in the production process. How do we find anything in this ever-growing haystack when no-one is able to keep up with the news and no-one is able to bring any order to the collection of images?

Traditionally, we used computers to handle large amounts of data and it wasn’t so long ago that computers were essentially “blind” to visual information – by which we mean that software programs were unable to recognize or identify images. “Seeing” a picture was the exclusive preserve of people. Fortunately, recent advances in AI technology have changed this. With the right tools, we can now entrust computers to analyze and describe photos. It is just a matter of developing the hardware and software to enable machines to keep track of the avalanche of visual information.

In the following article, I will tell you how harnessing AI technology has changed (and is still changing) the way that our company Media Press handles images in its PHOBOSS and HUBERT systems. But first – let’s define the problems that needed to be solved.

- Using our systems, our customers select and crop tens of thousands of photos every day. Can we reduce costs and improve quality at the same time by removing humans from the process?

- A huge number of working hours is taken up describing pictures that are added to any media database. Describing and then categorizing images is key to the subsequent task of locating a desired photo held in a database. Can we employ AI to provide a detailed description of a photo? If so, then how do we make sure that images are correctly and automatically tagged?

- Editors spend many hours writing photo captions. Most captions simply describe the people in a given image and seldom reference objects or locations. Can we use AI to recognize the people featured and automatically generate text? If so, can it be done in multiple languages simultaneously?

- In the print production business, it is quite normal to delete the background and create a cut-out of people from a photo in order to create an attractive layout, with text or other objects wrapping around the head and shoulders or the body. There is a demand for such graphic techniques to be applied in online publications – to produce attractive, Netflix-like covers for VOD or online services. But it is a painstakingly slow business to map out the selected area manually. Maybe AI can help? Of course it can.

Automatic selection and cropping

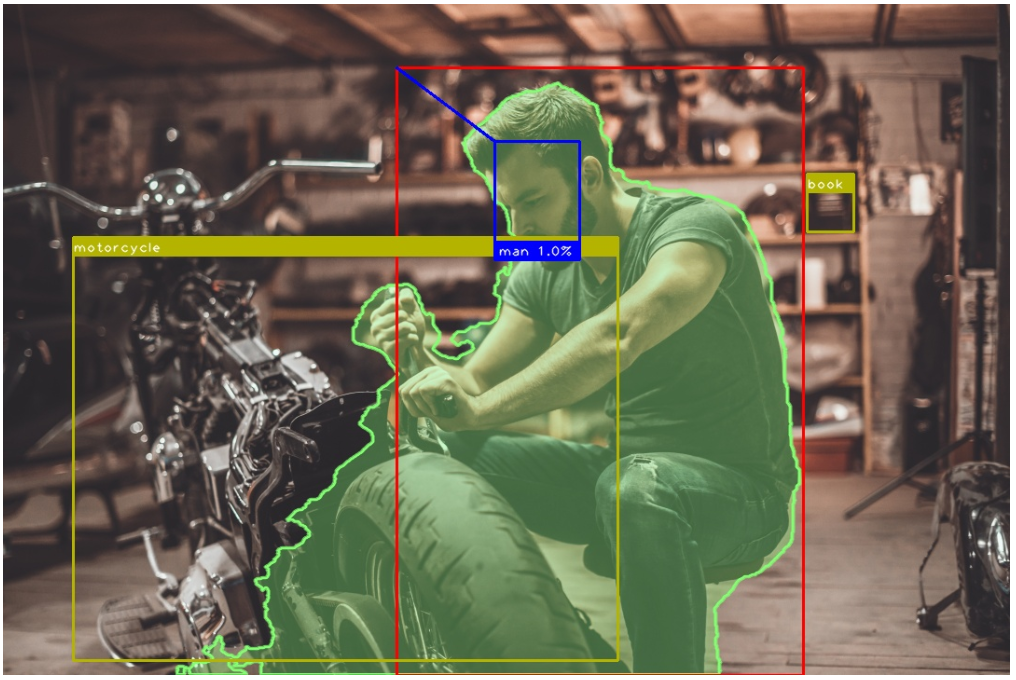

It is a common scenario in the EPG/web world – a customer insists on a photograph being available for every TV programme, selected according to pre-defined criteria, cropped and re-sized to the customer’s UI place-holder requirements. So we are talking about hundreds of thousands of images every week which have to be edited – according to a range of target sizes. How does AI help? Here is a working example:

- Detected the motor mechanic’s face (blue box)

- Detected the mechanic’s outline (red box) and linked it to his face

- Detected the salient parts of the photo (green outline)

- Identified the motorcycle

- Identified the subject’s face as that of a male

Taking all of this information into our system means it is possible to generate different crops of the photo automatically using a range of different ratios. We can take the salient region (the motorcycle and the man) and his face, or generate an aesthetically pleasing target image. It is vital that this information is available during the cropping process. Otherwise the photo would look like the one below, and it would be impossible to crop it properly.
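To make the idea concrete, here is a minimal Python sketch of how detected boxes could drive a crop at a given aspect ratio. It is an illustration only and not the PHOBOSS implementation: the `Box` type, the `union` and `crop_for_ratio` functions and all pixel coordinates are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned rectangle in pixel coordinates (hypothetical helper type)."""
    left: float
    top: float
    right: float
    bottom: float

    @property
    def width(self) -> float:
        return self.right - self.left

    @property
    def height(self) -> float:
        return self.bottom - self.top


def union(a: Box, b: Box) -> Box:
    """Smallest box containing both inputs, e.g. the salient region plus a face."""
    return Box(min(a.left, b.left), min(a.top, b.top),
               max(a.right, b.right), max(a.bottom, b.bottom))


def crop_for_ratio(image_w: float, image_h: float, focus: Box, ratio: float) -> Box:
    """Return a crop with width/height == ratio, centred on `focus` where possible."""
    # Smallest window of the requested ratio that still covers the focus box.
    crop_w = max(focus.width, focus.height * ratio)
    crop_h = crop_w / ratio
    # If that window is larger than the image, shrink it (the focus may get clipped).
    scale = min(1.0, image_w / crop_w, image_h / crop_h)
    crop_w, crop_h = crop_w * scale, crop_h * scale
    # Centre on the focus box, then slide the window back inside the image.
    cx = (focus.left + focus.right) / 2
    cy = (focus.top + focus.bottom) / 2
    left = min(max(cx - crop_w / 2, 0), image_w - crop_w)
    top = min(max(cy - crop_h / 2, 0), image_h - crop_h)
    return Box(left, top, left + crop_w, top + crop_h)


# Made-up detections for a 1000 x 600 photo.
salient = Box(600, 100, 900, 500)
face = Box(620, 120, 700, 210)
focus = union(salient, face)
for ratio in (1.0, 4 / 3, 16 / 9):
    print(ratio, crop_for_ratio(1000, 600, focus, ratio))
```

The same focus box yields a different crop for every target ratio, which is why the detection results have to be available at cropping time.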

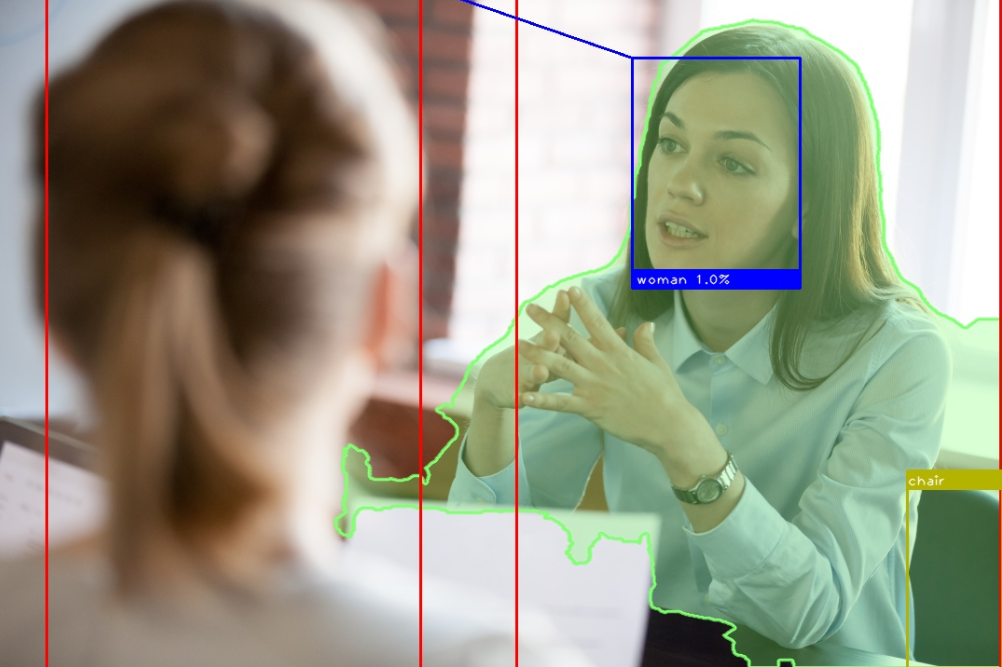

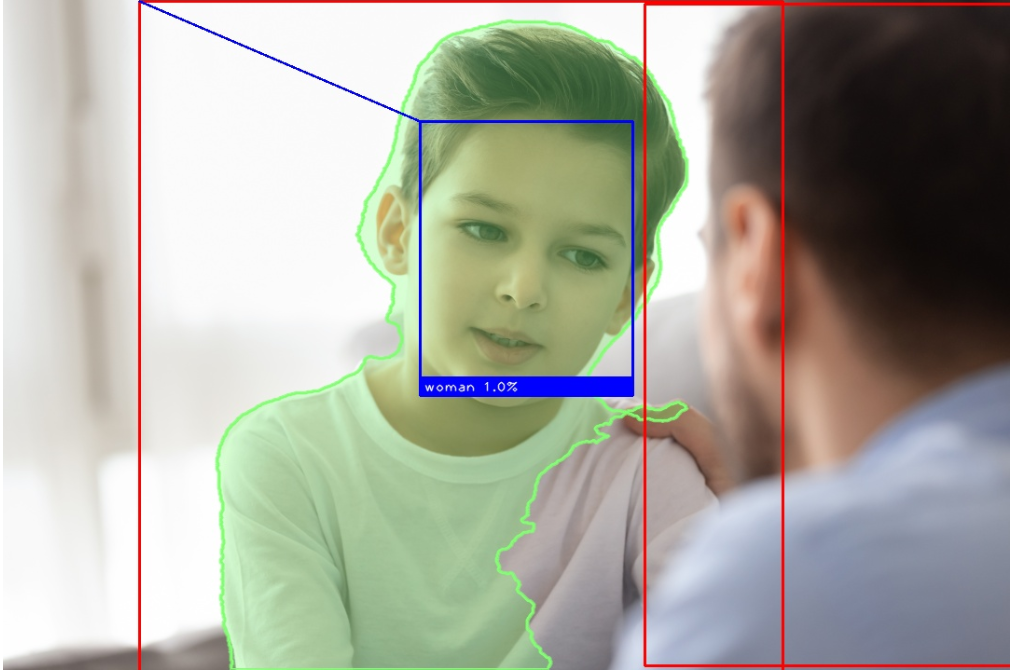

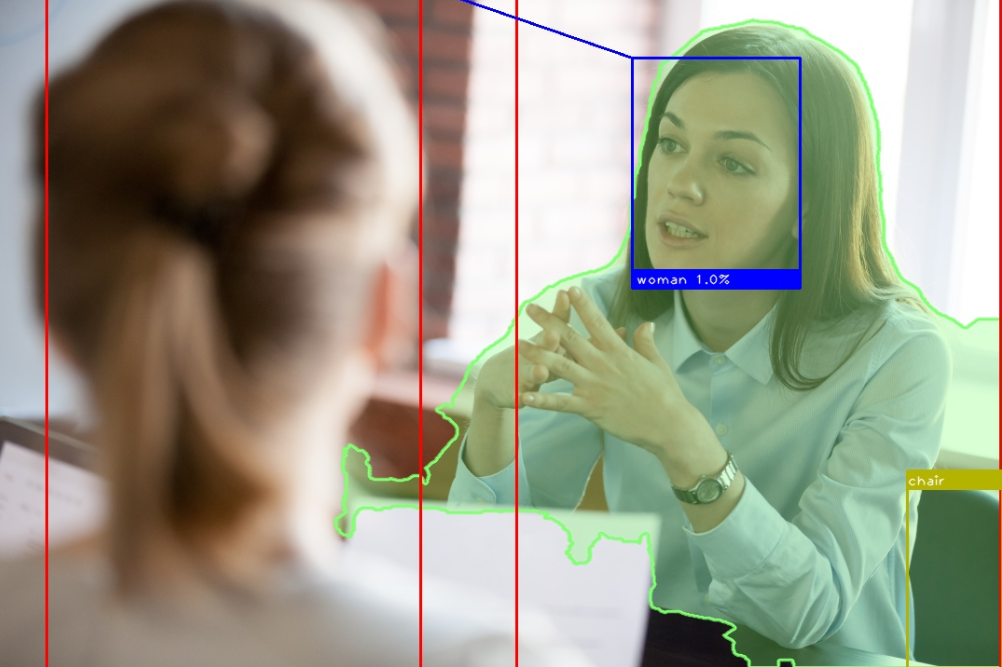

That was the easy part. How about more complex compositions? Let’s take a photo like this:

The composition is asymmetric. There are four people in the photo. Three are in focus and are detected as forming the primary salient region (green outline), but one, who is out of focus, is a secondary subject. In this case, the orientations of the different faces can help the cropping algorithm determine where the proper cut should be. But what if there are no people featured in the photo? Are we able to apply an automatic recognition and cropping process in such a situation?

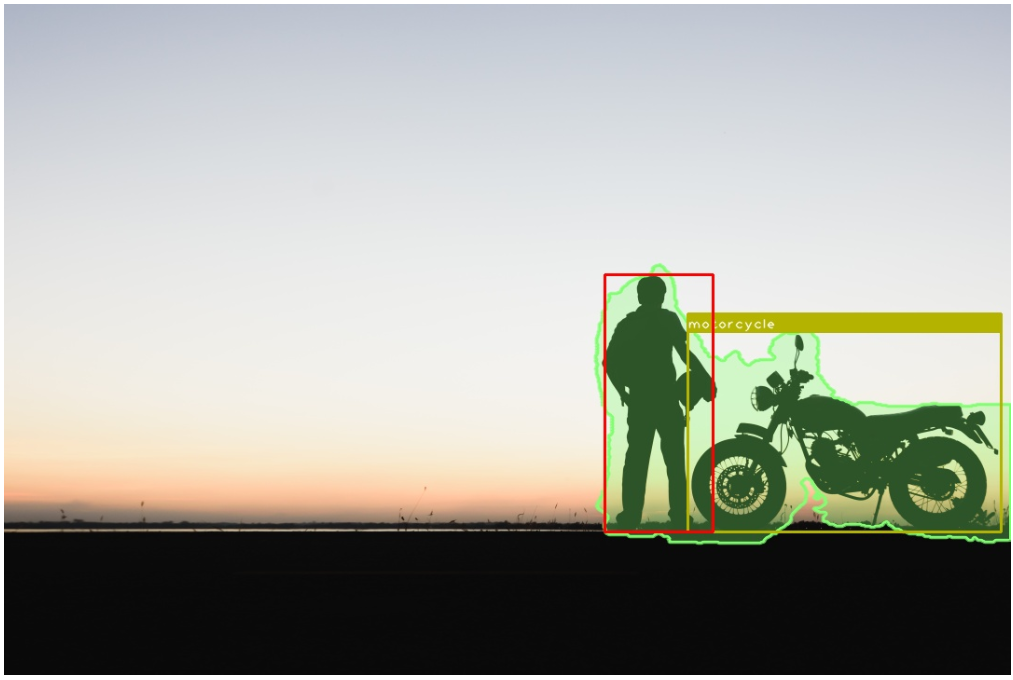

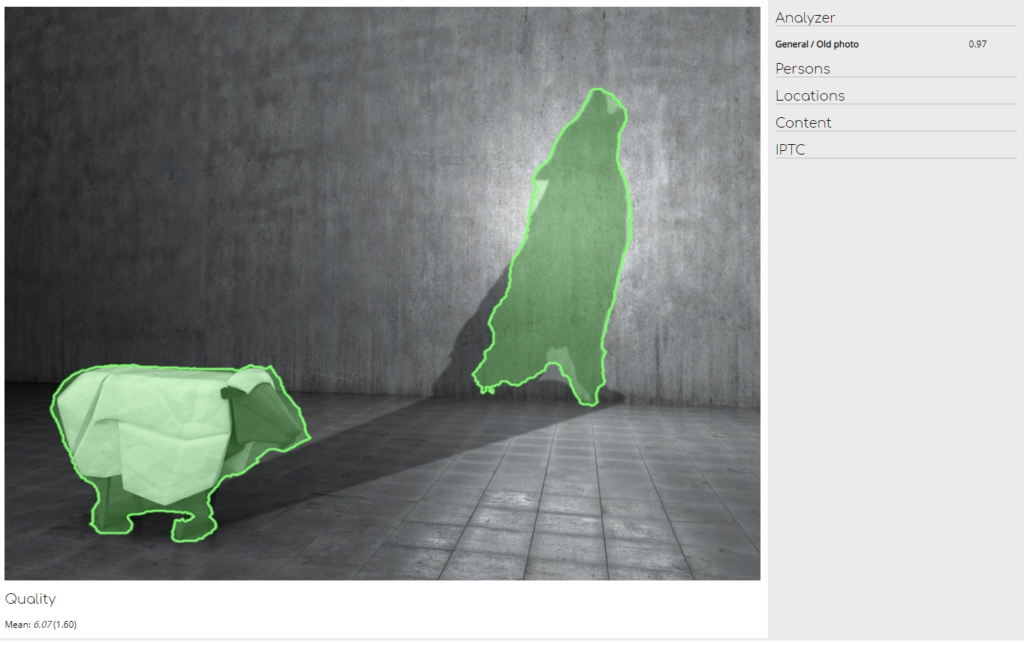

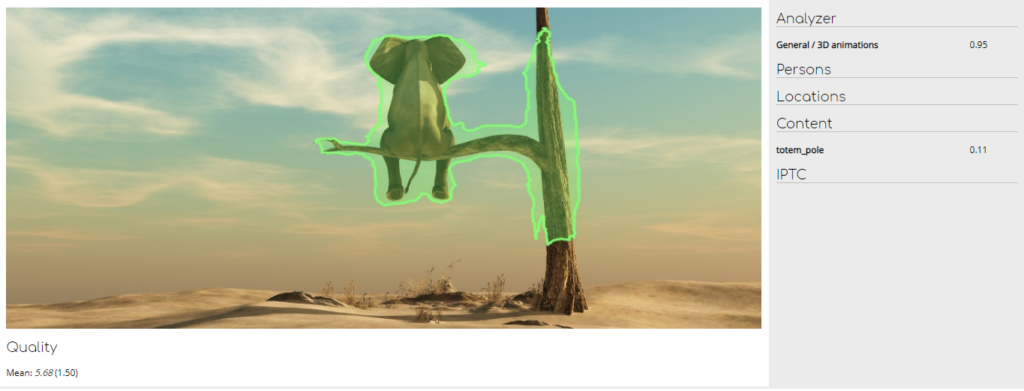

Yes, we can. Thanks to the saliency detection tool, we are able to locate the main object in a photo, even if there are no people in it. Additionally, the proper crop can be detected because the system can check which objects are in focus in order to highlight any persons in the background, like this:

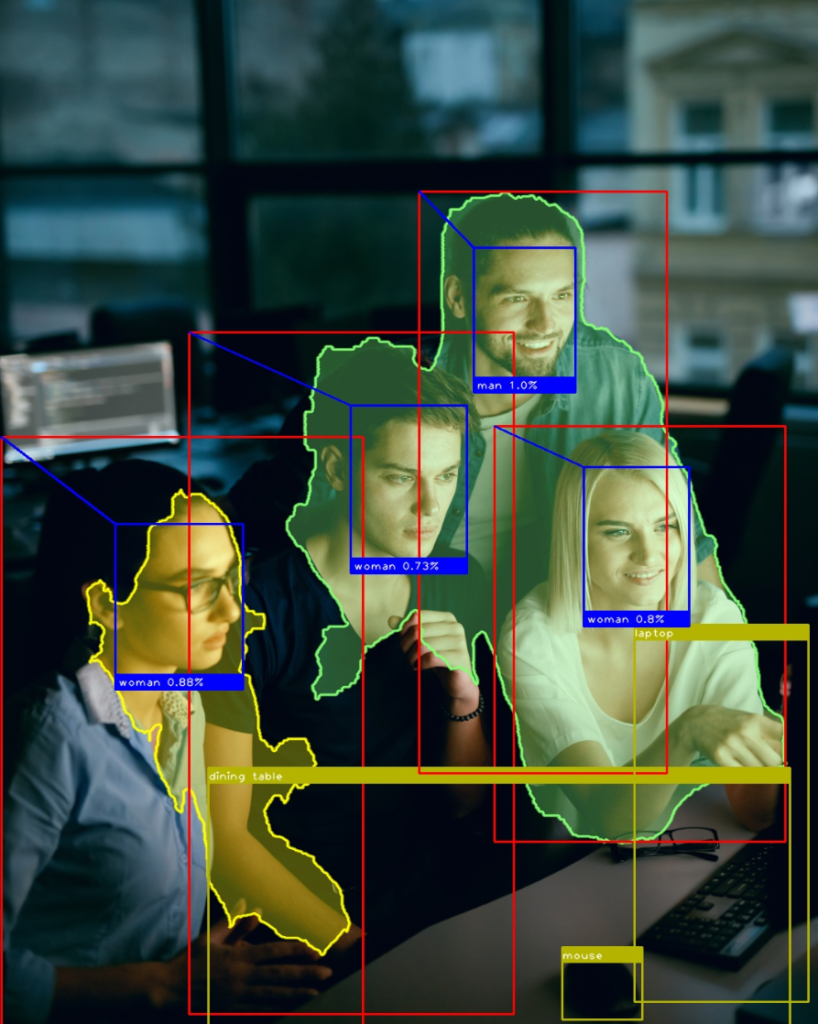

Or more testing compositions such as this one:

In this case, we should note that the image was also identified as a 3D animation.

The other aspect of our story is that the tool is able to automatically select the photo from a content-related collection of images according to rules defined by the customer. As well as this, PHOBOSS DAM applies a powerful copyright handling check. And we can also employ the AI analysis to:

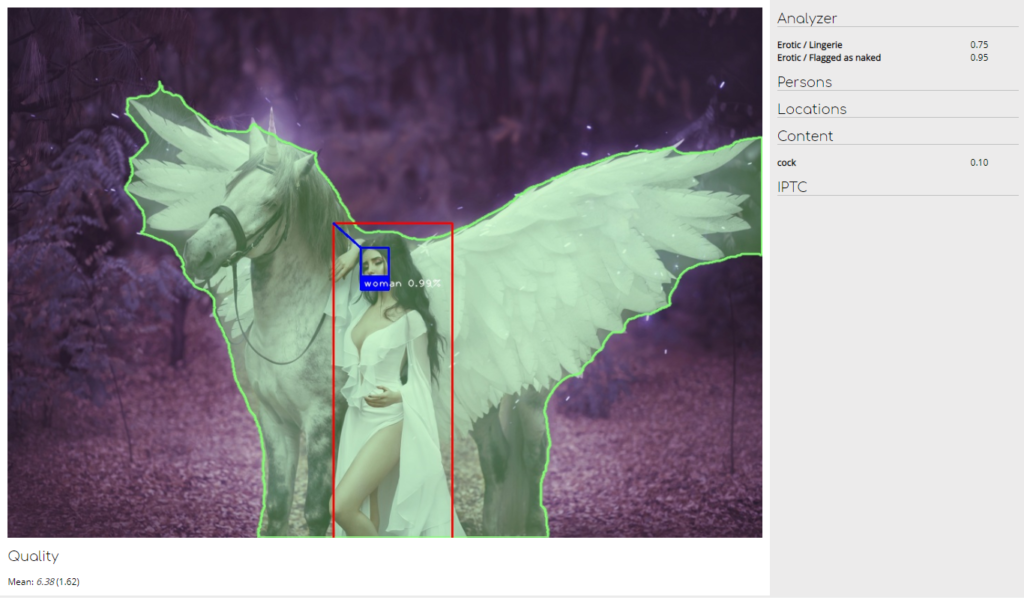

- Discard any erotic-themed images if a customer so wishes

- Avoid photos depicting excessive violence

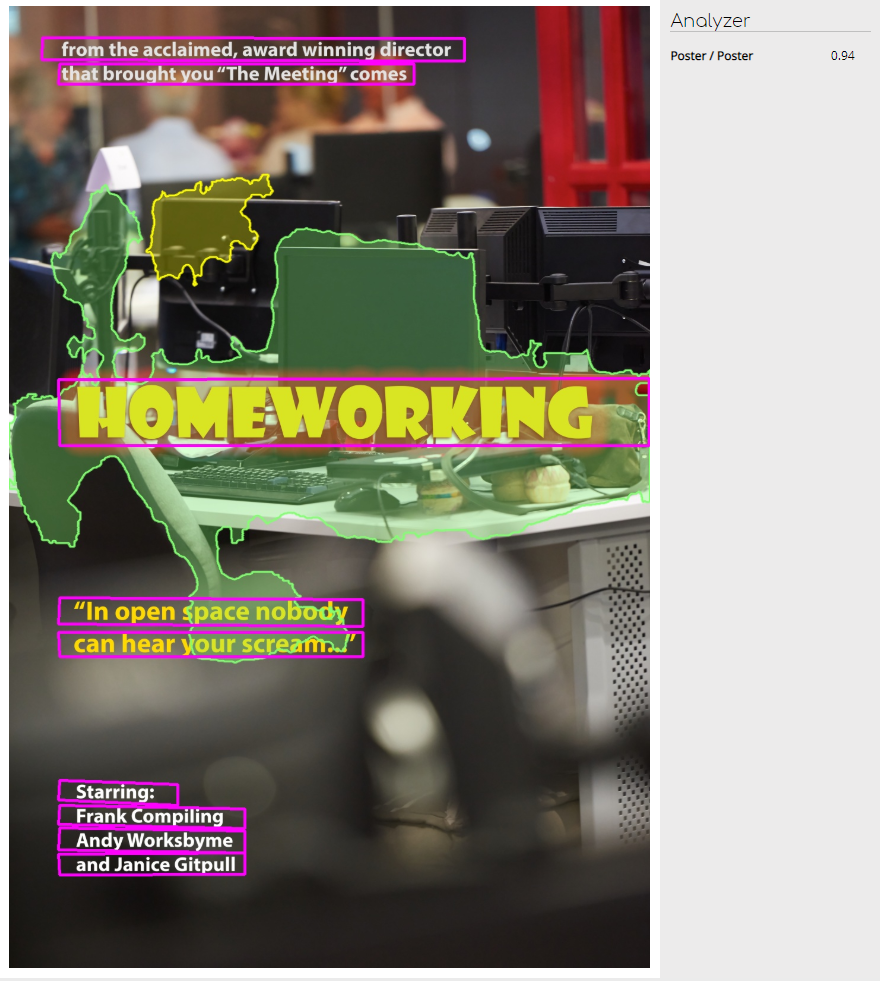

- Filter out posters or branded photos
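The filtering rules above can be sketched as a simple threshold check over per-label confidence scores. This is only an assumed shape for such rules: the `Candidate` type, the label names, the scores and the `select_photo` function are hypothetical and do not describe the actual PHOBOSS rule engine.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, Optional


@dataclass
class Candidate:
    """A photo plus hypothetical classifier scores (label -> confidence in 0..1)."""
    name: str
    scores: Dict[str, float] = field(default_factory=dict)


def select_photo(candidates: Iterable[Candidate],
                 max_scores: Dict[str, float]) -> Optional[Candidate]:
    """Return the first candidate that stays within every customer-defined limit."""
    for candidate in candidates:
        # A missing label is treated as a score of 0.0 (nothing detected).
        if all(candidate.scores.get(label, 0.0) <= limit
               for label, limit in max_scores.items()):
            return candidate
    return None  # No photo satisfies the rules.


# Made-up example data for one programme.
photos = [
    Candidate("poster.jpg", {"poster": 0.97}),
    Candidate("fight.jpg", {"violence": 0.80}),
    Candidate("still.jpg", {"poster": 0.02, "violence": 0.10}),
]
customer_rules = {"erotic": 0.2, "violence": 0.3, "poster": 0.5}
chosen = select_photo(photos, customer_rules)
print(chosen.name if chosen else "no suitable photo")
```

Keeping the limits in a plain dictionary means each customer can carry its own set of rules without any change to the selection code.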

Automatic photo descriptions and content identification

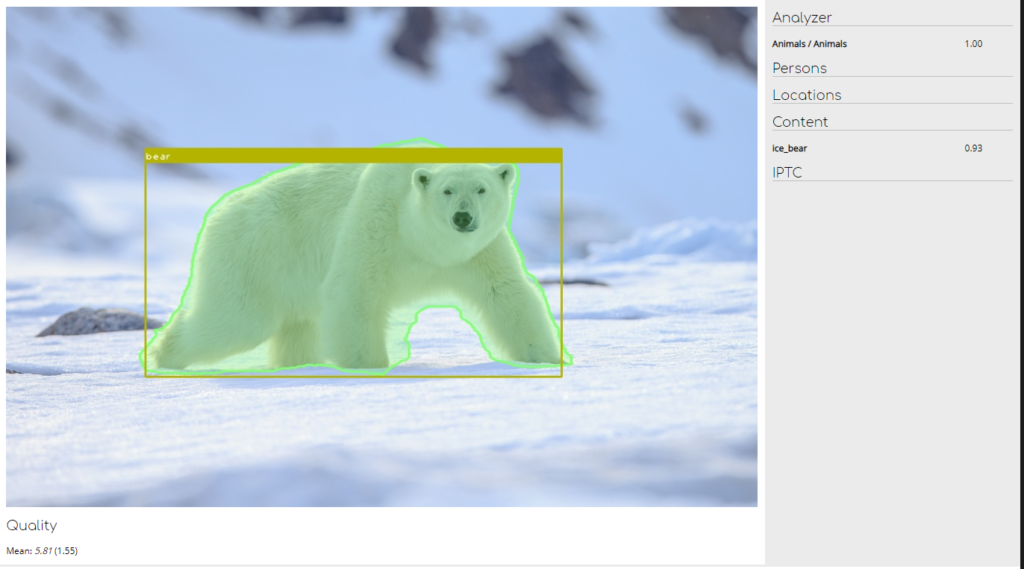

To obtain the maximum possible amount of useful information from a photo, our AI tool applies a number of techniques. Let’s take a look at the results from this photo analysis example:

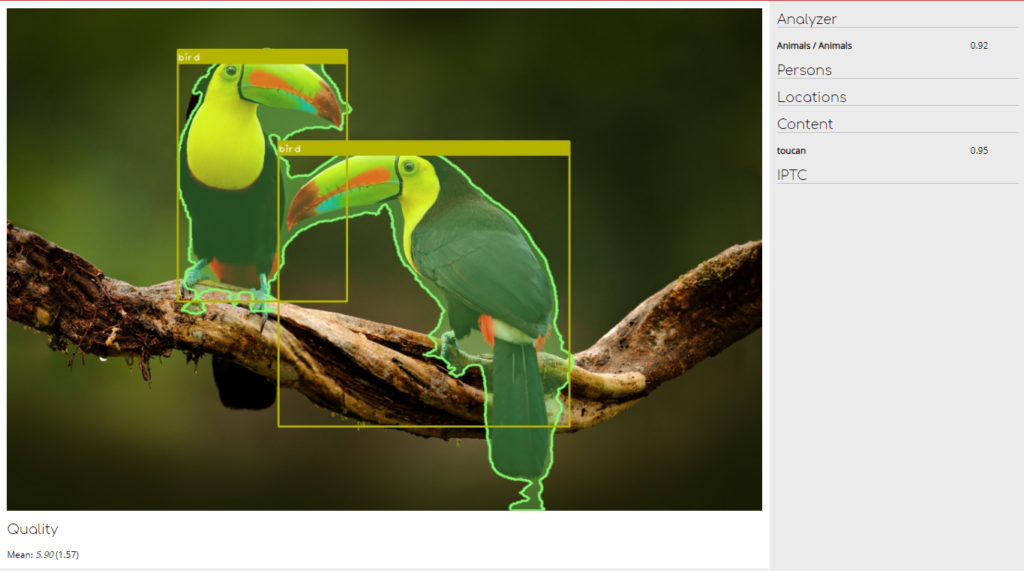

The system not only correctly identified the salient area but also recognized the object as a bear. What is more, the tool also identified that the bear in question is an example of an ice_bear (as seen on the right, the tool gave the result a confidence rating of 0.93). The same goes with the birds:

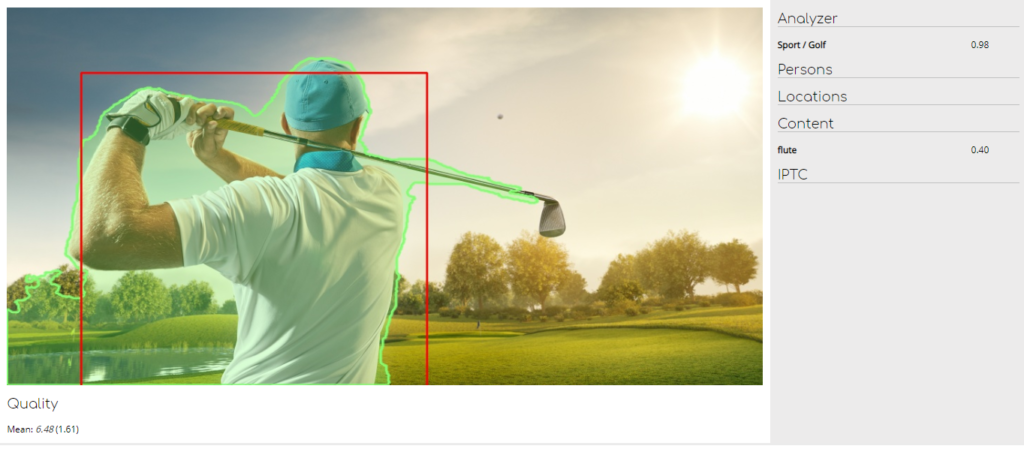

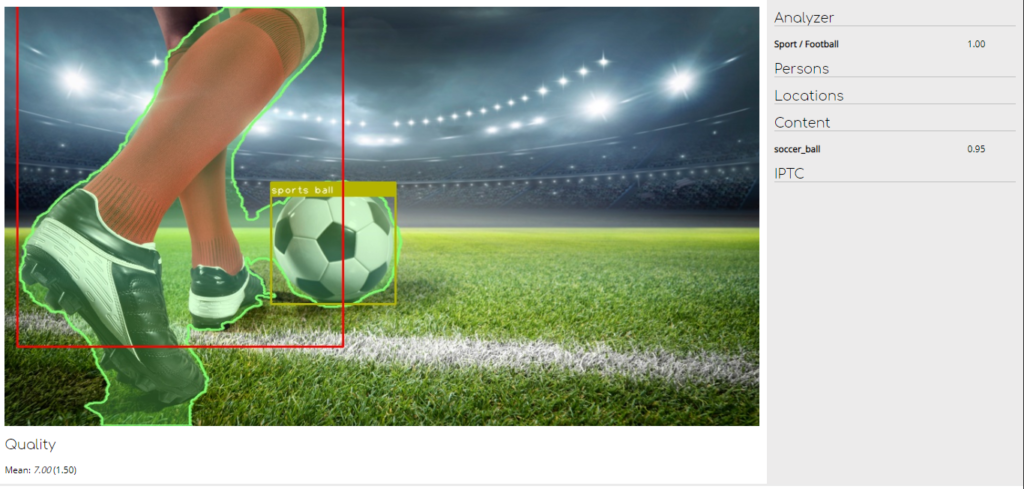

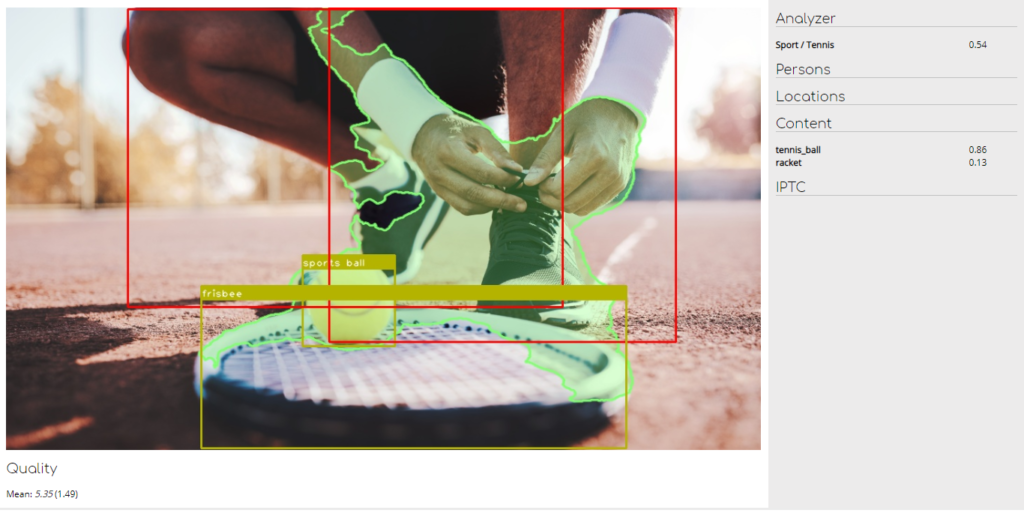

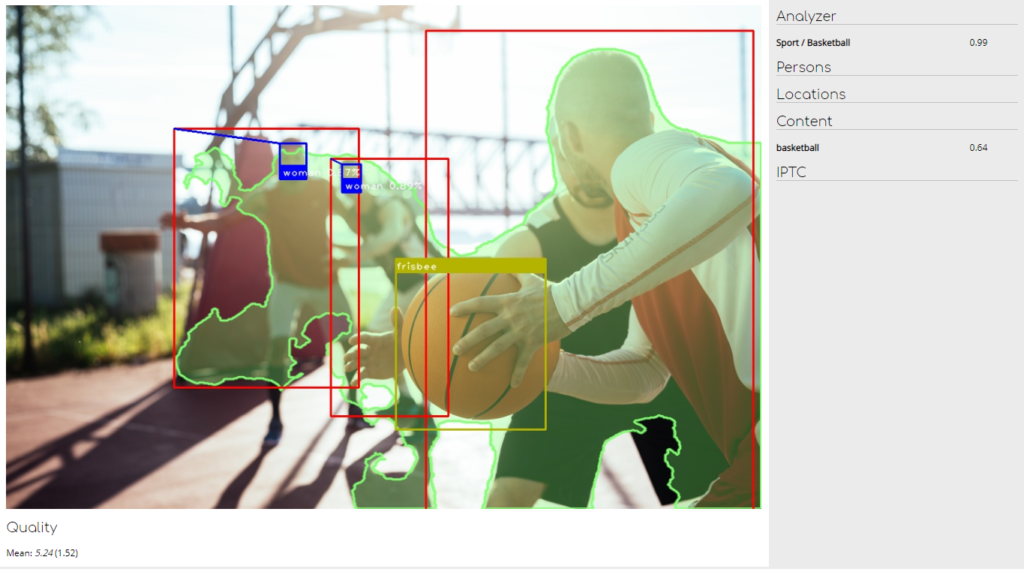

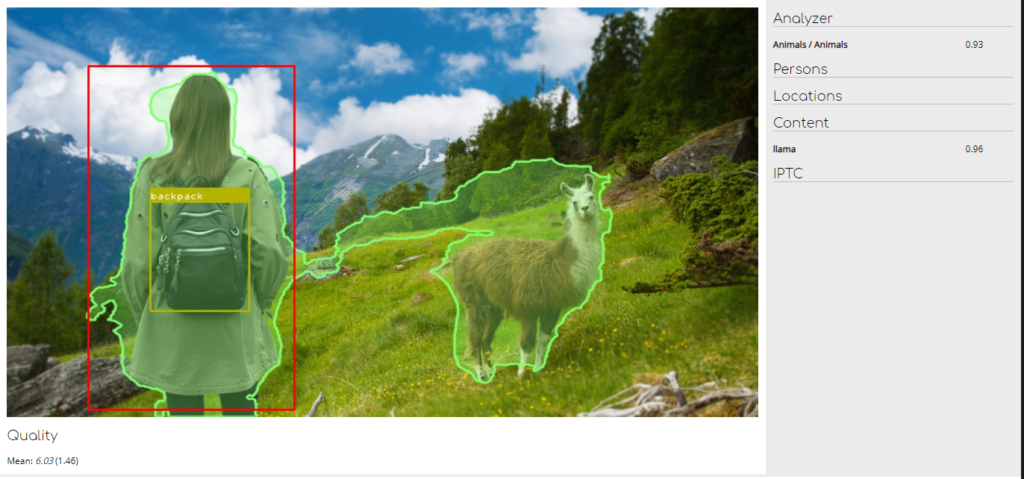

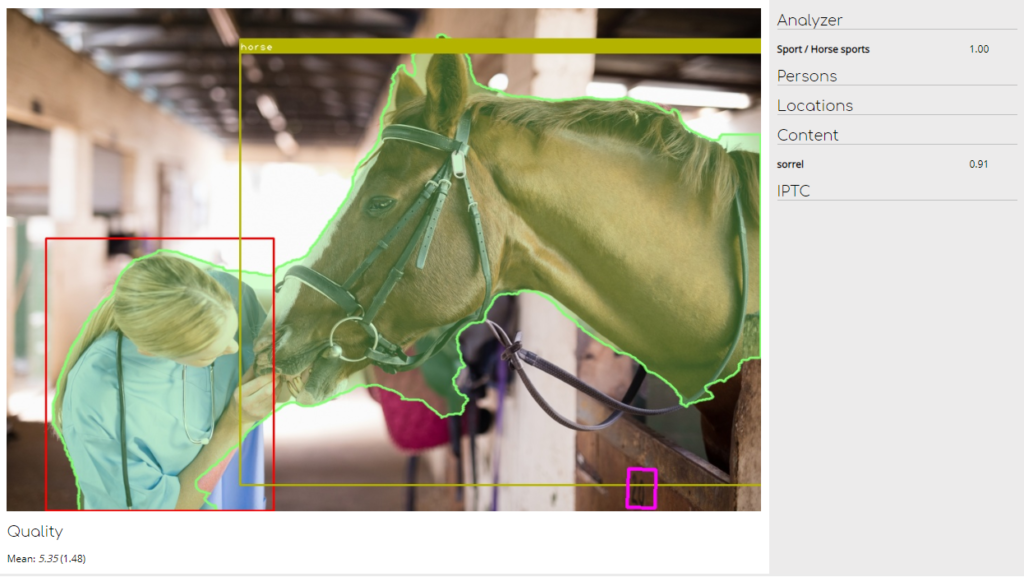

Let’s take four more examples:

We can see here that content analysis results identified the images as sports photos. What’s more, the sports discipline was also identified.

These content detection results can be used as additional keywords when searching the archive, however unlikely or outlandish the query. For example, we can request any images of a particular actor in the company of a llama:

Or a horse:

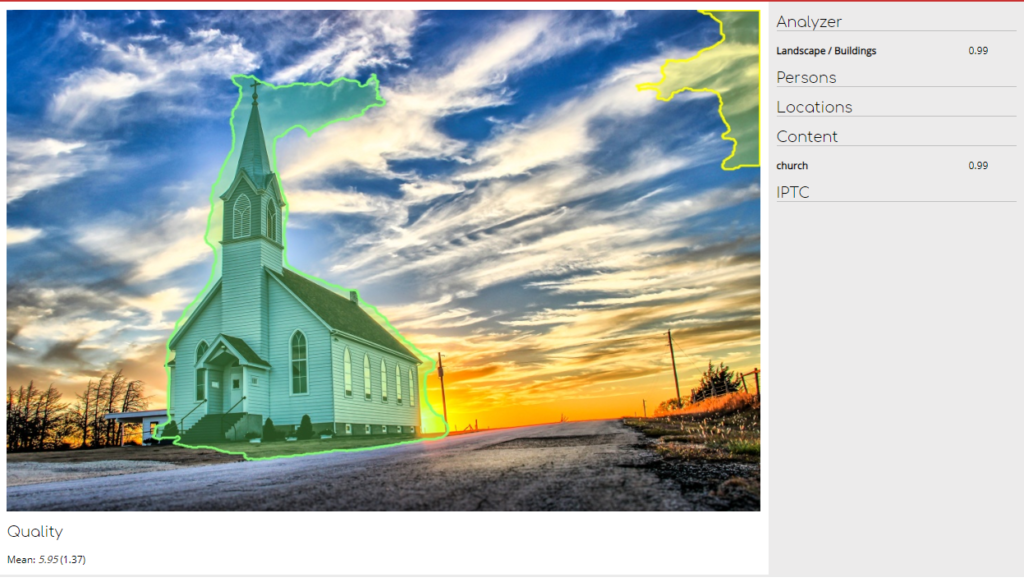

Or we can locate a photo of a church to illustrate the story:

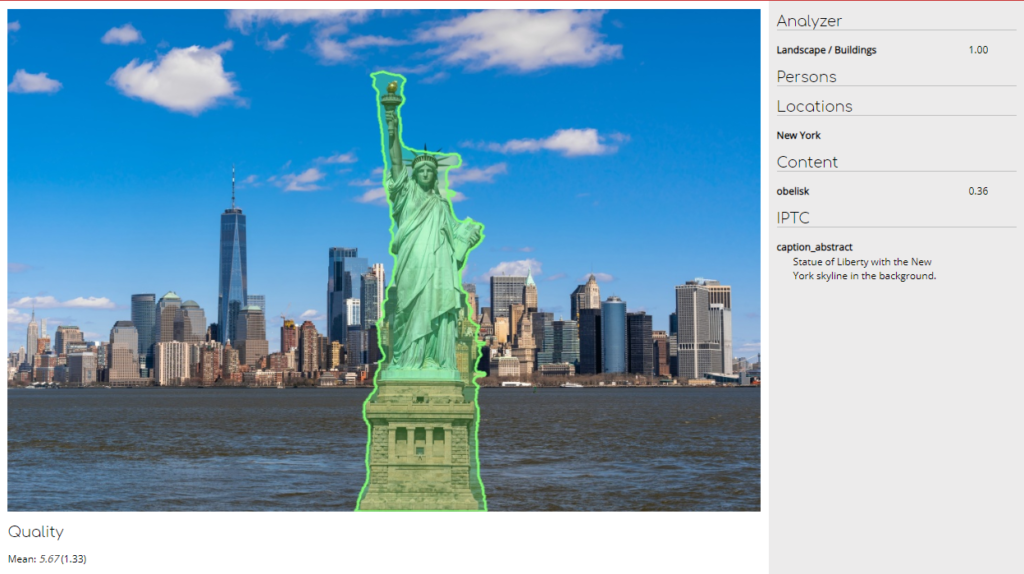

Or a monument:

IPTC/EXIF/XMP tags function as sources of additional information, and are analyzed by an additional AI-based process in order to identify an object by a generic or specific name, for example in relation to certain geographical locations. In this case, New York is identified.
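A keyword search of this kind can be sketched with a small inverted index that maps each detected label to the photos carrying it. The functions `build_index` and `search`, the confidence threshold and the sample tags below are assumptions for illustration, not the search engine used in our archive.

```python
from collections import defaultdict
from typing import Dict, List, Set


def build_index(photo_tags: Dict[str, Dict[str, float]],
                min_confidence: float = 0.5) -> Dict[str, Set[str]]:
    """Map each sufficiently confident label to the set of photos that carry it."""
    index: Dict[str, Set[str]] = defaultdict(set)
    for photo, tags in photo_tags.items():
        for label, confidence in tags.items():
            if confidence >= min_confidence:
                index[label.lower()].add(photo)
    return index


def search(index: Dict[str, Set[str]], *keywords: str) -> List[str]:
    """Return photos that match every keyword (logical AND), sorted by name."""
    if not keywords:
        return []
    matches = [index.get(keyword.lower(), set()) for keyword in keywords]
    return sorted(set.intersection(*matches))


# Made-up detection results: label -> confidence.
tags = {
    "a.jpg": {"some actor": 0.98, "llama": 0.91},
    "b.jpg": {"some actor": 0.95, "horse": 0.88},
    "c.jpg": {"llama": 0.40, "church": 0.90},
}
index = build_index(tags)
print(search(index, "Some Actor", "llama"))
```

Labels below the threshold are left out of the index, so a weak detection such as the llama in `c.jpg` does not pollute the search results.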

Identification of erotic material

As photos are usually exported to a variety of customers, sensitivity is required where erotic images are concerned. Our AI tool is able to detect such content on a number of levels. Let’s take a wholly innocent image such as this one:

It is not that our AI tool is overly sensitive or zealous, but it has correctly detected potentially inappropriate or offensive erotic content in this image of a unicorn (as you can see, recognition of mythical creatures is work in hand).

Poster recognition

As part of the automatic photo selection process, it is often important not to select posters. This is especially so if the photo to be exported is intended to act as background for a customer’s UI. Our neural network is able to recognize posters as well:

Facial recognition

Detection of faces is a very useful tool but why not take things to the next level and apply an AI system that matches a person in a photo to their biographical details from metadata records held in the archive? The ability to link information in this way allows us to:

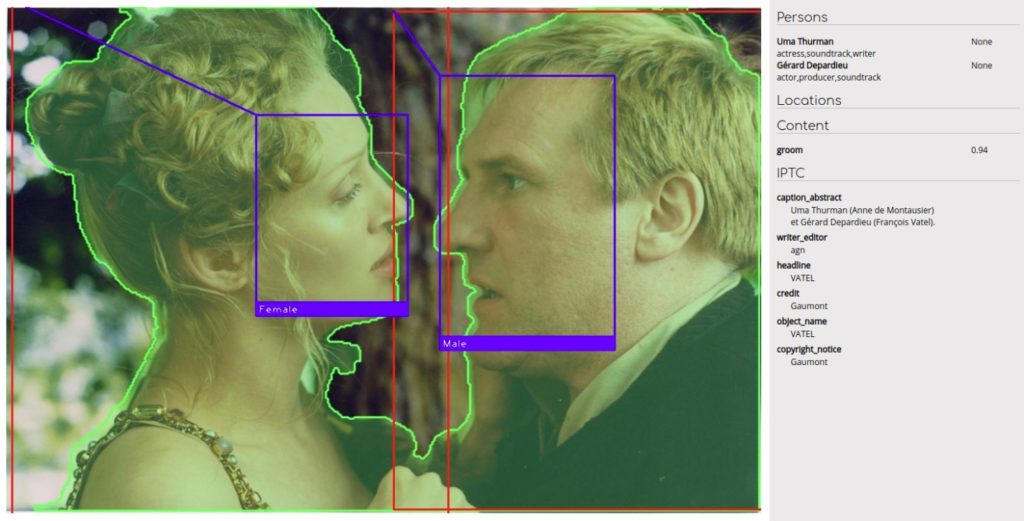

- Automatically generate a simple caption – here it might be: “From left to right: Uma Thurman and Gérard Depardieu”. Alternatively, if the photo is connected to a movie description in HUBERT, we would see: “Uma Thurman as Anne de Montausier, and Gérard Depardieu as François Vatel”.

- Search for a person, from cast and crew information held in the metadata database.
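As a rough sketch of the caption step, the recognized faces can be ordered by their horizontal position and joined into a sentence. The `make_caption` function, its input format (name plus the x coordinate of the face) and the coordinates used here are assumptions for illustration; the real captions come from HUBERT metadata.

```python
from typing import Dict, List, Optional, Tuple


def join_names(parts: List[str]) -> str:
    """Join as 'A', 'A and B' or 'A, B and C'."""
    if len(parts) <= 1:
        return "".join(parts)
    return ", ".join(parts[:-1]) + " and " + parts[-1]


def make_caption(faces: List[Tuple[str, float]],
                 roles: Optional[Dict[str, str]] = None) -> str:
    """Build a caption from (name, x position of the face) pairs.

    If `roles` maps a name to a character, the caption reads 'X as Y';
    otherwise people are simply listed from left to right.
    """
    ordered = [name for name, _x in sorted(faces, key=lambda face: face[1])]
    if roles:
        return join_names([f"{name} as {roles[name]}" if name in roles else name
                           for name in ordered])
    prefix = "From left to right: " if len(ordered) > 1 else ""
    return prefix + join_names(ordered)


# Face positions are made up; the names and roles are those from the example above.
faces = [("Gérard Depardieu", 620.0), ("Uma Thurman", 140.0)]
print(make_caption(faces))
print(make_caption(faces, {"Uma Thurman": "Anne de Montausier",
                           "Gérard Depardieu": "François Vatel"}))
```

Sorting by position before formatting keeps the "left to right" wording truthful regardless of the order in which the faces were detected.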

All such results are double-checked against the global IMDb database, which means that additional information about the people identified can be included in context. Here, the IMDb ID is delivered along with the photo:

Automatic cut-outs

It takes a highly-skilled Photoshop operator considerably more than a few minutes to manually apply a cut-out of a person in any given image and to manipulate the object on the page ready for text and other graphics to be arranged accordingly. It also requires the requisite Photoshop licence. But wouldn’t it be better and easier if the AI tool gave us a helping hand to generate a cut-out image in an instant?

Our PHOBOSS DAM AI tool is designed to recognize the outline of a person in a given photo and to let the editor fine-tune the intricate crop, generating an alpha matte that produces high-quality results.

First – an example of something simple:

That was relatively easy because of the absence of hair. What happens with a subject with a fuller crop of hair?

Or with curly follicles?

This alpha-matted image is suitable for a print product as well as for an online publication. The client can change the background, add floating or wrapping text around the actor or apply various designs to interest the reader.
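Changing the background with an alpha matte comes down to a per-pixel blend, out = alpha * foreground + (1 - alpha) * background. The sketch below shows that standard compositing formula on tiny hand-made images; the `composite` function and the nested-list image format are assumptions chosen to keep the example dependency-free, not the way PHOBOSS stores images.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (red, green, blue), each 0..255


def composite(foreground: List[List[Pixel]],
              alpha: List[List[float]],
              background: List[List[Pixel]]) -> List[List[Pixel]]:
    """Blend a cut-out over a new background using its alpha matte.

    alpha is 1.0 where the pixel belongs fully to the subject, 0.0 where it is
    pure background, and in between for soft edges such as strands of hair.
    """
    result = []
    for fg_row, alpha_row, bg_row in zip(foreground, alpha, background):
        result.append([
            tuple(round(a * f + (1.0 - a) * b) for f, b in zip(fg_pixel, bg_pixel))
            for fg_pixel, a, bg_pixel in zip(fg_row, alpha_row, bg_row)
        ])
    return result


# One row of three pixels: fully subject, soft edge, fully background.
subject = [[(200, 100, 0)] * 3]
matte = [[1.0, 0.5, 0.0]]
new_background = [[(0, 0, 250)] * 3]
print(composite(subject, matte, new_background))
```

The fractional alpha values along the edge are what make hair look natural on the new background instead of leaving a hard, jagged outline.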

Summary

Thanks to the hard work of our software engineers and the time we have invested in gathering expertise in the AI/machine learning area, Media Press is well placed to offer its clients an innovative tool to help them reduce the costs associated with handling large quantities of photos. Our offer has proved attractive to new clients from emerging market sectors. We are keen to hear from any media enterprise struggling to keep on top of its expanding archive of image assets.

The development of an AI-based editorial service creates new opportunities. Right now, we are looking at ways of analyzing videos and using language processing techniques to devise exciting ways of utilising our enormous content database.
